How Intelligent is Artificial Intelligence?

Much is said about artificial intelligence. Before discussing whether it will save or destroy us, we should clarify what we mean when we call a machine “intelligent.”

What is intelligence?

The Latin word intelligentia comes from inter (between) and legere (to gather, to pick out) — so something like “to untangle” or “to analyse.” That is a useful starting point. Intelligence, in this sense, is about making distinctions, finding patterns, separating signal from noise.

The standard textbook definition from Russell (2010) puts it this way:

Intelligence is concerned mainly with rational action […] Ideally, an intelligent agent takes the best possible action in a situation.

This can be formalised. If we want to find the best action $a^*$ from all possible actions, we look for the one with the highest expected utility given a certain state $s$:

$$ a^* = \underset{a \in \text{Actions}}{\text{argmax}} \ E(\text{Utility}(\text{Result}(a, s))) $$

This formula looks tidy. The trouble is in the details.

The parts we understand

We have made progress on several components of this formula:

- State estimation — the $s$: understanding what situation we are in

- Modelling outcomes — the $\text{Result}(a, s)$: predicting what happens when we take an action

- Search — the $\text{argmax}$: efficiently finding the best option among many

- Probabilistic reasoning — the $E$: dealing with uncertainty

These are difficult problems, and the solutions work.

The breadth of AI

Artificial intelligence is a broad field. It encompasses classical rule-based systems, search algorithms, constraint satisfaction, planning, and various forms of machine learning — from decision trees and support vector machines to neural networks and reinforcement learning.

Large language models (LLMs) receive most attention today. They excel at natural language processing, conversation, and — to some degree — reasoning. But they are one technique among many. Computer vision, robotics, game playing, and optimisation often rely on different approaches, sometimes combined. A neural network is, at its core, a function that takes inputs and produces outputs through layers of weighted sums:

$$ \sum_{i=1}^{m} w_i x_i + \varepsilon $$

Stack enough of these layers, train them on enough data, and you get systems that can recognise faces, translate languages, or generate images from text descriptions.

“A sea otter with a pearl earring” by Johannes Vermeer — generated by DALL-E (2022)

“A sea otter with a pearl earring” by Johannes Vermeer — generated by DALL-E (2022)

Same prompt — generated by Google Gemini (2026)

Same prompt — generated by Google Gemini (2026)

The part we do not

But what about the Utility function? What makes an outcome good?

Here the difficulties begin. Norbert Wiener, one of the pioneers of cybernetics, wrote in 1960:

We had better be quite sure that the purpose put into the machine is the purpose which we really desire.

The word “desire” deserves attention. It is too weak. Our desires are often shallow, inconsistent, or wrong. We desire convenience, but we value privacy. We desire cheap goods, but we value fair working conditions. We desire engagement, but we value truth.

You cannot simply ask people what they want. Research on organ donation shows this clearly: whether someone becomes a donor depends heavily on whether the default is opt-in or opt-out. Austria and Germany are culturally similar, but their donation rates differ dramatically because of this administrative detail. People avoid difficult choices when they can.

The Clever Hans problem

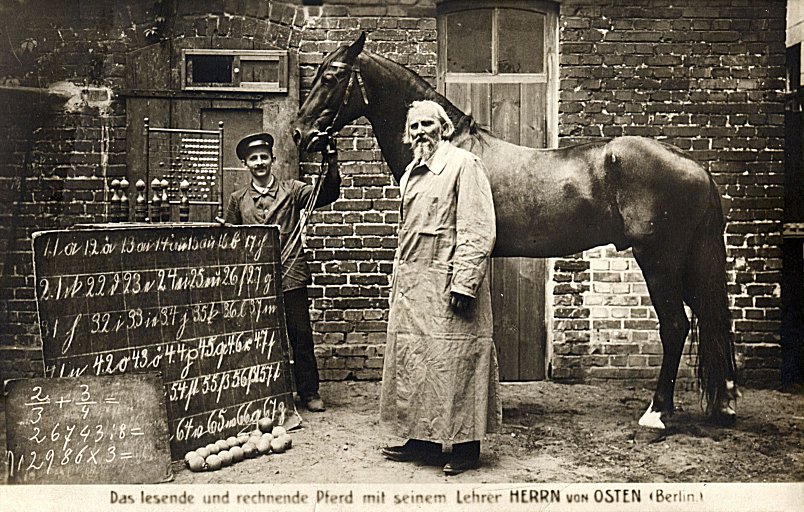

Clever Hans (1895-1916): the horse that seemed to solve arithmetic problems

Clever Hans (1895-1916): the horse that seemed to solve arithmetic problems

There is a famous story about a horse named Clever Hans who could apparently solve arithmetic problems. He would tap his hoof the correct number of times. It turned out he was not doing maths at all — he was reading subtle cues from his trainer’s body language.

Modern AI systems can be Clever Hans too. Lapuschkin (2019) call this “Clever Hans predictors”:

In the worst case, the trained model does not learn a valid and generalizable strategy to solve the problem it was trained for, and becomes a ‘Clever Hans’ predictor that bases its decisions on spurious correlations in the training data.

The system appears intelligent, but it has learned the wrong thing. It has found a shortcut that works in the training environment but fails in the real world.

From choice architects to choice makers

Thaler (2009), in their book Nudge, talk about “choice architects” — the people who design the context in which we make decisions. The government official who decides the default on a form. The doctor who presents treatment options in a certain order.

With AI assistants, we are moving from choice architects to choice makers. The machine does not just present options; it decides for us. It filters our news, recommends our purchases, suggests our routes.

In principle, such assistants could help us act according to our values even when we are tired or distracted. In practice, the companies building these systems want to make money. They optimise for engagement, conversion, retention. These metrics do not necessarily align with what is good for us.

Peter Norvig, in his talk As We May Program, puts it sharply: when we optimise for click rates as a measure of preference, we end up spreading “a virus that promotes short term wants over society’s real needs.” The system learns to give us what we click on, not what is good for us.

What would a values-aligned AI look like?

None of these people exist. Generated by StyleGAN (Karras (2019)) — already impressive back then.

None of these people exist. Generated by StyleGAN (Karras (2019)) — already impressive back then.

We do not know.

Utilitarians like Bentham and Mill tried to reduce ethics to maximising utility — but never defined what “utility” means. Pleasure? Preference satisfaction? Objective well-being? The formula $\underset{a}{\text{argmax}} \ E(\text{Utility}(a))$ is only as good as our definition of utility.

Aristotle, Kant, and others developed sophisticated frameworks for ethical reasoning. Translating their insights into code remains an unsolved problem.

Most people share basic moral intuitions about fairness, harm, and reciprocity. But intuitions conflict, and they can be manipulated.

Yoshua Bengio’s LawZero initiative proposes one direction: rather than building agentic systems that imitate human behaviour (including deception), develop “Scientist AI” — systems trained to understand and explain, not to pursue goals autonomously. The core principle: “The protection of human joy and endeavour” should guide every frontier AI system. Whether this approach scales remains to be seen.

Three observations

On transparency. If an AI system makes decisions that affect our lives, we should understand how it works. Modern deep learning models are difficult to interpret. This does not exempt us from the requirement. The EU AI Act, in force since 2024, takes this seriously: high-risk AI systems must be designed for transparency, with clear documentation of capabilities, limitations, and potential risks. Penalties for non-compliance can reach 7% of global turnover.

On defaults. The choices AI systems make by default shape behaviour at scale. These defaults should be set deliberately, not merely optimised for engagement.

On humility. Alan Turing, in 1951: “Even if we could keep the machines in a subservient position, for instance by turning off the power at strategic moments, we should, as a species, feel greatly humbled.”

We are building systems that are powerful but not wise. This is not a reason to stop. It is a reason to proceed with care.

This post draws on material from a talk I gave at the PPW VIU Conference in 2022 and an unpublished essay from September 2017.

References

- Russell, Stuart and Norvig, Peter. (2010). Artificial Intelligence: A Modern Approach. Pearson.

- Lapuschkin, Sebastian and Wäldchen, Stephan and Binder, Alexander and Montavon, Grégoire and Samek, Wojciech and Müller, Klaus-Robert. (2019). Unmasking Clever Hans predictors and assessing what machines really learn. Nature Communications.

- Thaler, Richard H. and Sunstein, Cass R.. (2009). Nudge: Improving Decisions About Health, Wealth and Happiness. Penguin.

- Johnson, Eric J. and Goldstein, Daniel. (2003). Do Defaults Save Lives?. Science.

- Karras, Tero and Laine, Samuli and Aila, Timo. (2019). A Style-Based Generator Architecture for Generative Adversarial Networks. ↗